Chatbots have become a fundamental part of many development projects and are therefore a key element of the BESSER low-code platform (currently under development). We needed a solution that made it easy to define chatbots and then generate and run them. With this goal in mind, we started working on the BESSER Bot Framework (BBF), and we are excited to announce its first stable release!

These are some highlights of BBF:

- State machines: BBF follows a state machine formalism that makes it possible to create all kinds of bots. Transitions between states can be triggered by the intent detection mechanism embedded in the framework.

- Python as the programming language: BBF is an internal DSL, embedded in Python, that can be extended with any Python library. We hide the complexity of bots, so there is no need to be an expert in bot languages (or even in Python) to build your bots. At the same time, if you know Python, feel free to leverage all your expertise when building them.

- NLP capabilities: intent recognition, named entity recognition (and, soon, speech recognition for voice bots) and multilingual support (tested with English, Spanish, French, German, Catalan and, partially, Luxembourgish) are all built into the core of the framework, so there is no need to connect to external NLP engines.

- Open source: BBF is released as open-source software and is available on GitHub.

BBF builds on everything we have learned about building chatbots in the past. For instance, our previous bot framework taught us that we needed to provide easy integration with AI components, mainly NLP ones. To achieve that, we decided to move to Python, since most of the ML community works with it, and we designed BBF for easy integration with state-of-the-art AI tools. We also learned that, instead of creating an external DSL for defining chatbots, it is wiser to build on a general-purpose programming language and take advantage of its capabilities to avoid reinventing the wheel. So as not to restrict the pool of potential users, we needed a popular language with a friendly syntax. Again, Python was the best candidate for our internal DSL. BBF is released as a Python library, so you can install it with `pip install besser-bot-framework`.

BESSER Bot Framework: A brief overview

The BESSER Bot Framework documentation contains everything you need to know about creating chatbots with BBF: a tutorial for beginners, a wiki explaining the different components of a chatbot, some example chatbots and an API reference. Here, we briefly explain the most important ideas.

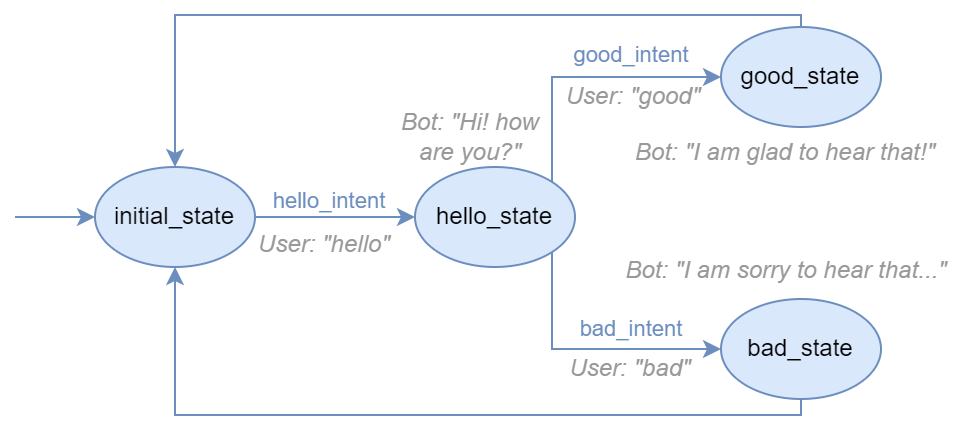

Simple example model of a bot that greets the user and replies according to the user's mood.

The chatbot model is a state machine composed of states and transitions between them. When a user starts an interaction, they are placed in the initial state of the chatbot. Transitions are triggered by events, and a very common event in chatbots is the matching of the user utterance (i.e., the text entered by the user) with one of the bot's intents.
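To make the idea concrete, here is a minimal, framework-agnostic sketch of a bot as a state machine in plain Python. It is not BBF's API: the classes `State` and `ToyBot`, their methods and the intent names are hypothetical, and we assume intent recognition has already turned the user's message into an intent name.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class State:
    """A state with outgoing transitions keyed by the intent that triggers them."""
    name: str
    transitions: dict[str, str] = field(default_factory=dict)  # intent -> target state


class ToyBot:
    """Hypothetical toy bot (not BBF's API): a state machine driven by intent events."""

    def __init__(self, initial: str) -> None:
        self.states: dict[str, State] = {initial: State(initial)}
        self.current = initial  # every new conversation starts in the initial state

    def add_state(self, name: str) -> None:
        self.states[name] = State(name)

    def add_transition(self, source: str, intent: str, target: str) -> None:
        self.states[source].transitions[intent] = target

    def on_intent(self, intent: str) -> str:
        """Fire the transition matching the recognized intent; stay put if none matches."""
        transitions = self.states[self.current].transitions
        self.current = transitions.get(intent, self.current)
        return self.current


# Greeting bot loosely inspired by the figure above; state and intent names are made up
bot = ToyBot(initial="initial")
for name in ("hello", "good", "bad"):
    bot.add_state(name)
bot.add_transition("initial", "hello_intent", "hello")
bot.add_transition("hello", "good_intent", "good")
bot.add_transition("hello", "bad_intent", "bad")

print(bot.on_intent("hello_intent"))  # hello
print(bot.on_intent("good_intent"))   # good
```

In this sketch, an intent with no matching transition simply leaves the bot in its current state.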

An intent is the goal or purpose behind a message or query. When designing a bot, you also need to define the intents the chatbot is expected to recognize. For each intent, we provide some example sentences so the bot can learn what messages with that intent look like. Intents can also embed parameters that the bot captures (e.g., numbers, dates or names). The chatbot's internal NLP engine is responsible for recognizing the user's intent and extracting the parameters embedded in the message.
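The following sketch illustrates the two NLP tasks described above, recognizing an intent from example sentences and extracting a parameter, using deliberately naive techniques. BBF ships its own NLP engine; here we swap in a word-overlap (Jaccard) score and a regular expression instead, and the intent names, example sentences, threshold and helper functions are all hypothetical.

```python
from __future__ import annotations

import re


def words(text: str) -> set[str]:
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z']+", text.lower()))


# Hypothetical intents, each described by a few example sentences
INTENTS = {
    "weather_intent": [
        "what is the weather in Paris",
        "weather in London",
        "is it raining in Rome",
    ],
    "greeting_intent": ["hello", "hi there", "good morning"],
}


def recognize_intent(message: str, threshold: float = 0.3) -> str | None:
    """Return the intent whose example sentences best overlap the message, or None."""
    msg = words(message)
    best_intent, best_score = None, 0.0
    for intent, examples in INTENTS.items():
        for example in examples:
            ex = words(example)
            union = msg | ex
            score = len(msg & ex) / len(union) if union else 0.0  # Jaccard similarity
            if score > best_score:
                best_intent, best_score = intent, score
    return best_intent if best_score >= threshold else None


def extract_city(message: str) -> str | None:
    """Naive parameter extraction: the capitalized word right after 'in'."""
    match = re.search(r"\bin\s+([A-Z][\w-]*)", message)
    return match.group(1) if match else None


message = "What is the weather in Madrid?"
print(recognize_intent(message), extract_city(message))  # weather_intent Madrid
```

A word-overlap score cannot generalize to paraphrases that share few words with the examples, which is why practical NLP engines typically rely on trained models instead.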

All this and more can be done in Python with the BBF library! But the coolest feature is state bodies. Each state has its own body, a Python function that the bot runs when a user moves into that state. States also have a second body, called the fallback body, which runs when an error occurs in the main body (this is a nice place to query an LLM 😉, see below).
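Here is a rough sketch of the body/fallback mechanism, assuming (as described above) that the fallback body runs whenever the main body raises an error. `ToyState`, `price_body` and `apology_fallback` are hypothetical names, not part of BBF, and replies are collected in a plain list.

```python
from __future__ import annotations

from typing import Callable

# A body receives the list of replies to send back to the user
Body = Callable[[list[str]], None]


class ToyState:
    """Hypothetical state (not BBF's API) with a main body and a fallback body."""

    def __init__(self, name: str, body: Body, fallback: Body) -> None:
        self.name = name
        self.body = body
        self.fallback = fallback

    def enter(self, replies: list[str]) -> None:
        """Run the body when the user enters the state; on any error, run the fallback."""
        try:
            self.body(replies)
        except Exception:
            self.fallback(replies)


def price_body(replies: list[str]) -> None:
    prices = {"coffee": 2.5}
    # Raises KeyError (there is no "tea" entry), so nothing is appended here
    replies.append(f"A tea costs {prices['tea']} euros.")


def apology_fallback(replies: list[str]) -> None:
    replies.append("Sorry, something went wrong. Could you rephrase your question?")


replies: list[str] = []
ToyState("price", price_body, apology_fallback).enter(replies)
print(replies)  # ['Sorry, something went wrong. Could you rephrase your question?']
```

Catching any `Exception` guarantees the user always gets some reply; a real framework may also log the error for the bot designer.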

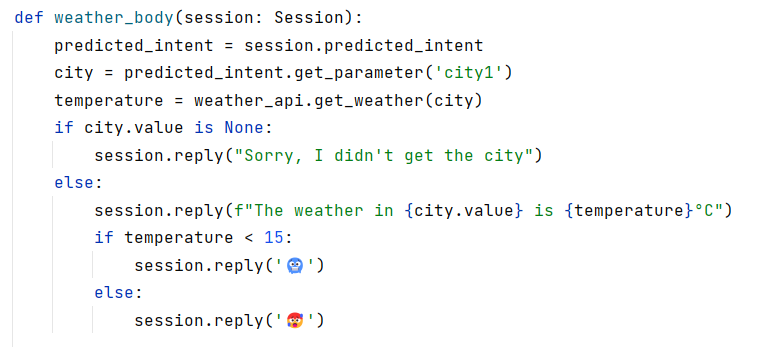

Example body function of a bot state that replies with the weather in the requested city.

This example body is executed after a user asks “What is the weather in CITY?”, where “CITY” is any city name. Note that bodies receive a Session parameter, where each user's private information is stored. Bodies therefore have read/write access to the session of the user who triggered their execution. Forget about having to integrate multiple tools, frameworks or languages: anything can be done within a chatbot state!
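The session idea can be sketched as follows. This is not BBF's actual `Session` class: the `Session` dataclass, its fields, `weather_body` and the `FAKE_FORECASTS` lookup (placeholder values standing in for a real weather service) are all hypothetical. We also assume the NLP engine has already stored the recognized city in the session.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical per-user session: private key-value storage plus outgoing replies."""
    user_id: str
    data: dict[str, str] = field(default_factory=dict)
    replies: list[str] = field(default_factory=list)

    def reply(self, message: str) -> None:
        self.replies.append(message)


# Placeholder values standing in for a call to a real weather service
FAKE_FORECASTS = {"Luxembourg": "cloudy", "Barcelona": "sunny"}


def weather_body(session: Session) -> None:
    """Body of a hypothetical weather state, run with the session of the user who asked."""
    city = session.data.get("city", "your city")  # assumed to be filled in by the NLP engine
    forecast = FAKE_FORECASTS.get(city)
    if forecast is None:
        session.reply(f"Sorry, I have no forecast for {city}.")
    else:
        session.reply(f"The weather in {city} is {forecast}.")
    session.data["last_city"] = city  # write access: remember the city for later turns


# Each user gets an independent session, so their data never mixes
sessions = {user: Session(user) for user in ("alice", "bob")}
sessions["alice"].data["city"] = "Luxembourg"
sessions["bob"].data["city"] = "Oslo"
for session in sessions.values():
    weather_body(session)

print(sessions["alice"].replies)  # ['The weather in Luxembourg is cloudy.']
print(sessions["bob"].replies)    # ['Sorry, I have no forecast for Oslo.']
```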

BBF chatbots communicate with users through third-party communication channels, which are made available via platforms. Platforms are another key component of chatbots: a platform is a wrapper that sends and receives data between users and the bot. We implemented a Telegram platform, so you can interact with your chatbots through Telegram. We also created a WebSocket platform that makes the chatbot accessible from any user interface that implements the WebSocket protocol (we also built a Streamlit UI for the WebSocket platform, but you can create your own for your chatbots). More platforms will be available soon! (You can leave your requests in the GitHub issues section or contribute to the growth of BBF: it is open source 🙂)
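A platform can be pictured as an adapter between a messaging channel and the bot. The sketch below is a hypothetical design, not BBF's platform API: `Platform`, `InMemoryPlatform` and `echo_bot` are made up, and the in-memory outbox stands in for a real channel such as Telegram or a WebSocket connection.

```python
from __future__ import annotations

from abc import ABC, abstractmethod
from typing import Callable

# Bot-side callback: (user_id, text) -> reply text
BotHandler = Callable[[str, str], str]


class Platform(ABC):
    """Hypothetical platform interface: moves messages between users and the bot."""

    def __init__(self, handle_message: BotHandler) -> None:
        self.handle_message = handle_message

    def receive(self, user_id: str, text: str) -> None:
        """Called when a user message arrives from the channel; forwards the bot's reply."""
        reply = self.handle_message(user_id, text)
        self.send(user_id, reply)

    @abstractmethod
    def send(self, user_id: str, text: str) -> None:
        """Deliver a bot message to the user through the channel."""


class InMemoryPlatform(Platform):
    """Test double standing in for a real channel such as Telegram or WebSocket."""

    def __init__(self, handle_message: BotHandler) -> None:
        super().__init__(handle_message)
        self.outbox: list[tuple[str, str]] = []

    def send(self, user_id: str, text: str) -> None:
        self.outbox.append((user_id, text))


def echo_bot(user_id: str, text: str) -> str:
    """A trivial bot that repeats the user's message."""
    return f"You said: {text}"


platform = InMemoryPlatform(echo_bot)
platform.receive("alice", "hi")
print(platform.outbox)  # [('alice', 'You said: hi')]
```

Because the bot only sees a `(user_id, text) -> reply` callback in this design, the same bot logic could be served through several channels by writing one subclass per channel.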

But do we still need to create chatbots now that we have LLMs? Aren't LLMs the cure for everything?

Chatbots are more popular than ever, thanks in part to the popularity and impressive capabilities of Large Language Models (LLMs). LLMs have reached the mainstream public and perform very well in a wide range of tasks such as open-domain question answering, coding or text summarization. They are huge neural networks, trained on vast amounts of text from the Internet, that learn to predict the next token (roughly, the next word) of a text, which lets them generate fluent answers to user inputs. Despite their popularity and performance, they are not a solution to every problem:

- LLMs suffer from hallucinations: some of their responses are false or inaccurate, often stated in a convincing and coherent manner despite lacking a factual basis.

- For data-driven applications, there is a risk of data leakage: if the model has been trained on sensitive data, that data may be extracted with carefully crafted prompts.

- There is an ongoing debate about the authorship of generated content. Does it belong to the owner of the LLM, to the authors of the data it was trained on, or to the user who wrote the prompt that generated it?

- LLMs keep getting bigger. For instance, Meta's 70-billion-parameter Llama-2 model takes up 140 GB. Integrating it into your tech stack requires a lot of computational resources or renting cloud services.

- LLMs are black boxes: it is very hard to fully understand the behavior or reasoning an LLM follows to generate a response. There is even a research field devoted to this issue, called Explainable AI (XAI).

- An LLM only generates text. To include other features in your app (e.g., database queries or API requests), you need to integrate your LLM with other software tools (LangChain, for example, tries to solve this).
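As a sanity check on the Llama-2 size mentioned above, the 140 GB figure follows from simple arithmetic, assuming the weights are stored in a 16-bit format (2 bytes per parameter) and ignoring the extra memory needed at inference time:

```latex
70 \times 10^{9}\ \text{parameters} \times 2\ \tfrac{\text{bytes}}{\text{parameter}}
  = 140 \times 10^{9}\ \text{bytes} = 140\ \text{GB}
```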

These weaknesses mean that LLMs are not the right solution for every application. Our chatbots, on the other hand, avoid these problems:

- You design the bot architecture. You decide what the bot does and doesn't do, and what it says and doesn't say. No hallucinations or black-box behavior!

- Your data can be safely stored and retrieved when needed by the authorized users through the chatbot. Full data and privacy control!

- Create lightweight chatbots (in terms of memory) that are highly portable, reusable and scalable.

- And you can still integrate other tools (even LLMs!): anything is possible with Python! For instance, you can connect an LLM to the default fallback state so that the bot tries to answer via the LLM when it does not recognize the user's question (remember to warn the user, though!).
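The LLM-in-the-fallback idea from the last point can be sketched like this. Everything here is hypothetical: `fake_llm` stands in for a real LLM client (no model is called), and simple keyword matching plays the role of the bot's intent recognition. The point of the design is that curated answers always win, and the LLM is only used, with a warning, when nothing is recognized.

```python
from __future__ import annotations

from typing import Callable


def fake_llm(question: str) -> str:
    """Hypothetical stand-in for a real LLM client; no model is queried here."""
    return f"(LLM-generated answer to: {question})"


# Answers fully controlled by the bot designer, keyed by a trigger keyword
CURATED_ANSWERS = {
    "install": "Run: pip install besser-bot-framework",
    "bbf": "BBF is the BESSER Bot Framework, an open-source Python library for building chatbots.",
}

LLM_WARNING = (
    "Warning: I did not recognize your question, "
    "so this answer comes from an AI model and may be inaccurate."
)


def answer(question: str, llm: Callable[[str], str] = fake_llm) -> str:
    """Use a curated answer when one matches; otherwise fall back to the LLM, with a warning."""
    text = question.lower()
    for keyword, reply in CURATED_ANSWERS.items():
        if keyword in text:
            return reply
    # Fallback path: nothing was recognized, so delegate to the LLM and warn the user
    return f"{LLM_WARNING}\n{llm(question)}"


print(answer("How do I install it?"))  # Run: pip install besser-bot-framework
print(answer("Tell me a joke"))
```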

We invite you to check out the documentation to learn more about BBF. If you find this project useful, don't forget to give us a star on GitHub 🌟 and stay tuned for upcoming updates!

Featured Image by Catalyststuff on Freepik

Software Engineer at Luxembourg Institute of Science and Technology (LIST)